Assistant API → Responses API: A Complete, Practical Migration Guide (with Next.js & Node examples)

A complete developer guide to migrating from OpenAI’s Assistant API to the new Responses API - with streaming, connectors, and production-ready patter

TL;DR: The Responses API gives you simpler request/response semantics, richer streaming events (including function calls), first‑class tool use, and persistent conversations. This guide shows exactly how to migrate a production app—how to handle streaming function calls, switch Threads→Conversations, transform attachments, wire connectors via MCP, support built‑in tools (web search, image generation), and add Whisper for audio. And how to create a robust streaming hook pattern you?

Why Responses API (and what changed)

- Mental model: With Responses, you send input items and receive output items. The API also surfaces granular streaming events for text deltas and tool calls, so you can execute functions as arguments stream in and then resume the model. (OpenAI Platform)

- State: You can manage multi‑turn state with the new Conversations API, or by chaining with

previous_response_idwhen you don’t need long‑lived conversations. (OpenAI Platform) - Tools & connectors: Tools (web search, file search, image generation, code interpreter) and connectors (MCP) are first‑class in Responses. The platform handles approvals by default; you control this when appropriate. (OpenAI Platform)

- Streaming: You can stream text, function‑call arguments, and completions; you can also stream while routing tool calls and then continue the same turn. (OpenAI Platform)

What maps to what (at a glance)

| Old (Assistants) | New (Responses) |

|---|---|

| Threads & Messages | Conversations + responses.create() with input items |

| Runs + polling | Streaming responses and streamed tool calls |

| Function calling | Function tools (same JSON Schema, better streaming UX) |

| File/image attachments | Input items: input_text, input_file, input_image |

| Vector store/file search | File Search tool |

| Third‑party APIs | Connectors (MCP servers) |

Docs: Responses overview & migration guidance. (OpenAI Platform)

Core migration strategy

- **Replace Runs + polling → Responses streaming **(SSE).

- Custom streaming hook that consumes Response events: we accumulate function args, run handlers (parallel), then resume the model with tool outputs.

- Conversations: automatically migrate old IDs to new

conv_*and save the mapping. - Attachments: simple “items” format for text, files, images.

- Tools: we enable built‑ins (web search, image generation, file search) plus connectors (via MCP) based on user selection; and we register custom function tools.

- Audio: transcription with Whisper / gpt‑4o‑transcribe (file or realtime). (OpenAI Platform)

1) Core API route: from polling to streaming

▶ Next.js (Route Handler: app/api/responses/route.ts)

// app/api/responses/route.ts

import OpenAI from "openai";

export const runtime = "edge"; // works well with streaming

const client = new OpenAI({ apiKey: process.env.OPENAI_API_KEY! });

export async function POST(req: Request) {

const body = await req.json();

// Build tools array dynamically (built-ins + connectors + custom functions)

const tools: any[] = [

{ type: "web_search" }, // built-in web search

{ type: "image_generation", model: "gpt-image-1" }, // built-in image gen

// Custom functions are added below (examples later)

];

// Add connectors (MCP) if the caller selected them and you have tokens

if (body.selectedConnectors?.includes("gmail") && body.gmailAccessToken) {

tools.push({

type: "mcp",

server_label: "google_gmail",

connector_id: "connector_gmail",

authorization: body.gmailAccessToken,

// choose an approval mode that fits your UX & risk model

require_approval: "never",

});

}

// Add your custom function tools (schemas shown later)

tools.push(...getCustomFunctionToolDefinitions());

// Conversations: continue or create new one

const conversation =

typeof body.conversationId === "string" && body.conversationId.startsWith("conv_")

? { id: body.conversationId }

: undefined;

// Stream the response (SSE under the hood)

const stream = await client.responses.stream({

model: body.model ?? "gpt-4o",

conversation, // optional: bind to a conversation

previous_response_id: body.prevId, // or chain lightweight state

input: body.input, // items: input_text/input_image/input_file

tools,

});

// OPTIONAL: hook into events server-side to drive function calls, logs, etc.

wireResponseStream(stream, {

onTextDelta: (t) => {/* forward to client logger if desired */},

onFunctionCall: (call) => {/* accumulate args per call_id */},

onFunctionCallArgsDelta: ({ call_id, delta }) => {/* buffer */},

onFunctionCallArgsDone: async (complete) => {

// When arguments for a call_id are complete, run your function

await handleFunctionCall(complete);

},

onError: (e) => console.error(e),

});

// Return as a web stream to the browser

return new Response(await stream.toReadableStream());

}

// --- streaming hook (general-purpose) ---

type StreamHandlers = {

onTextDelta?: (text: string) => void;

onFunctionCall?: (e: any) => void;

onFunctionCallArgsDelta?: (e: any) => void;

onFunctionCallArgsDone?: (e: { call_id: string; name: string; arguments: string }) => void;

onError?: (e: unknown) => void;

};

function wireResponseStream(stream: any, h: StreamHandlers) {

// Text deltas

stream.on("response.output_text.delta", (e: any) => {

h.onTextDelta?.(e.delta);

});

// Function call created (tells you which function & call_id)

stream.on("response.output_item.added", (e: any) => {

if (e.item?.type === "function_call") h.onFunctionCall?.(e.item);

});

// Function call arguments streaming (chunk deltas)

stream.on("response.function_call_arguments.delta", (e: any) => {

h.onFunctionCallArgsDelta?.(e);

});

// Function call arguments completed

stream.on("response.function_call_arguments.done", (e: any) => {

h.onFunctionCallArgsDone?.(e);

});

stream.on("error", (e: any) => h.onError?.(e));

}

// Provide your JSON Schemas separately

function getCustomFunctionToolDefinitions() {

return [

{

type: "function",

name: "get_weather",

description: "Get weather by city name",

parameters: {

type: "object",

properties: { city: { type: "string" }, unit: { type: "string", enum: ["C", "F"] } },

required: ["city"],

},

},

{

type: "function",

name: "lookup_order",

description: "Look up an order by id and return status",

parameters: {

type: "object",

properties: { orderId: { type: "string" } },

required: ["orderId"],

},

},

];

}

Why this works: Responses streaming emits rich events, e.g., response.output_text.delta for text and response.function_call_arguments.* for tool calls—you can read argument chunks, execute your handler when done fires, and then continue the same turn with the tool outputs. (OpenAI Platform)

Docs: Responses streaming, function‑call argument events. (OpenAI Platform)

▶ Node.js (Express)

// server/index.ts

import express from "express";

import OpenAI from "openai";

const app = express();

app.use(express.json());

const client = new OpenAI({ apiKey: process.env.OPENAI_API_KEY! });

app.post("/api/responses", async (req, res) => {

const { model, input, prevId, conversationId, selectedConnectors, gmailAccessToken } = req.body;

const tools: any[] = [

{ type: "web_search" },

{ type: "image_generation", model: "gpt-image-1" },

// Add custom function tool definitions (same as Next.js)

...getCustomFunctionToolDefinitions(),

];

if (selectedConnectors?.includes("gmail") && gmailAccessToken) {

tools.push({

type: "mcp",

server_label: "google_gmail",

connector_id: "connector_gmail",

authorization: gmailAccessToken,

require_approval: "never",

});

}

const stream = await client.responses.stream({

model: model ?? "gpt-4o",

input,

tools,

conversation: conversationId?.startsWith("conv_") ? { id: conversationId } : undefined,

previous_response_id: prevId,

});

// Pipe SSE directly to client

res.setHeader("Content-Type", "text/event-stream");

res.setHeader("Cache-Control", "no-cache");

res.flushHeaders();

// Forward the SSE bytes

const reader = await stream.toReadableStream();

const readerStream = reader.getReader();

try {

while (true) {

const { done, value } = await readerStream.read();

if (done) break;

res.write(value);

}

} finally {

res.end();

}

});

app.listen(3000, () => console.log("Listening on http://localhost:3000"));

function getCustomFunctionToolDefinitions() {

return [

{

type: "function",

name: "get_weather",

description: "Get weather by city name",

parameters: {

type: "object",

properties: { city: { type: "string" }, unit: { type: "string", enum: ["C", "F"] } },

required: ["city"],

},

},

{

type: "function",

name: "lookup_order",

description: "Look up an order by id and return status",

parameters: {

type: "object",

properties: { orderId: { type: "string" } },

required: ["orderId"],

},

},

];

}

2) Handling function calls (including parallel custom tools)

Flow:

- Model decides to call function(s).

- You receive streaming arg deltas, then a

donewith the full JSON args. - Execute your function(s)—in parallel when safe.

- Resume the same turn by sending tool outputs back (correlated to the

call_id). - The model reads your outputs and finishes the answer.

In Responses, tool calls and tool outputs are separate item types linked by a

call_id. You can chain viaprevious_response_idor by passing tool outputs as new input items. (OpenAI Platform)

▶ Next.js — parallel tool execution + resume

// A lightweight in-memory accumulator for call args

const pending: Record<string, { name: string; json: any }> = {};

async function handleFunctionCall(e: { call_id: string; name: string; arguments: string }) {

const args = JSON.parse(e.arguments || "{}");

pending[e.call_id] = { name: e.name, json: args };

}

// After multiple calls reach *.done you can dispatch parallel work and resume:

async function completeToolPhaseAndResume(params: {

// id from the streaming response (the turn to resume)

previous_response_id: string;

client: OpenAI;

}) {

// Fan out parallel executions

const entries = Object.entries(pending);

const results = await Promise.all(

entries.map(async ([call_id, { name, json }]) => {

let output: unknown;

if (name === "get_weather") {

output = await weatherService(json.city, json.unit);

} else if (name === "lookup_order") {

output = await orderService(json.orderId);

} else {

output = { error: `Unknown tool ${name}` };

}

return { call_id, output };

}),

);

// Clear local buffer for next turn

for (const key of Object.keys(pending)) delete pending[key];

// Send tool results back to the model and let it finish the turn.

// Pattern A: pass tool outputs as inputs correlated by call_id.

// (This mirrors the "items" model described in the migration guide.)

const resumed = await params.client.responses.create({

previous_response_id: params.previous_response_id,

input: results.map((r) => ({

role: "tool",

content: [

{

type: "tool_result",

call_id: r.call_id, // correlate to the original call

output_text: JSON.stringify(r.output),

},

],

})),

});

return resumed;

}

Docs: Streaming events (incl.

response.function_call_arguments.delta/.done) and the Responses migration guide explaining items & call correlation. (OpenAI Platform)

Parallelism: Function calling supports parallel tool calls—invoke multiple services concurrently and post all results before resuming. (OpenAI Platform)

▶ Node.js (Express)

Implementation is the same idea as the Next.js sample above—accumulate arg chunks per call_id, run handlers in parallel, then responses.create({ previous_response_id, input: [tool_result…] }) to finalize the turn.

3) Web search and image generation as built‑in tools

Enable the built‑ins by adding them to tools:

const tools = [

{ type: "web_search" }, // lets the model search the web

{ type: "image_generation", model: "gpt-image-1" }, // generate/edit images

];

- The web search tool lets models fetch real‑time context inside a single call. It’s configured like any tool and the model decides when to use it. (OpenAI Platform)

- The image generation tool uses

gpt-image-1from the Responses API. (OpenAI Platform)

4) Connectors (MCP) — e.g., Gmail

Connectors let a model call third‑party services (Gmail, Dropbox, etc.) via MCP. Add a connector tool only if the user opted in and you hold a valid token.

▶ Next.js

// When building tools[]

if (selectedConnectors.includes("gmail") && gmailAccessToken) {

tools.push({

type: "mcp",

server_label: "google_gmail",

connector_id: "connector_gmail",

authorization: gmailAccessToken,

// choose approval mode for your UX:

// "auto" (default approvals), "never", or "always" depending on risk profile

require_approval: "never",

});

}

- By default, the platform prompts for approvals before any data is sent to a connector/MCP server; configure approval flow based on your trust model. (OpenAI Platform)

- MCP is the protocol; connectors are first‑party integrations exposed via the same tool surface as remote MCP servers. (OpenAI Platform)

5) Attachments and inputs (images/files/text)

Under Responses, you send items instead of Message arrays. Typical patterns:

// Images only

input: [{

role: "user",

content: [

{ type: "input_text", text: "Compare these images" },

{ type: "input_image", image_url: "file:file-abc123" },

{ type: "input_image", image_url: "file:file-def456" },

],

}]

// Files only

input: [{

role: "user",

content: [

{ type: "input_text", text: "What is in this PDF?" },

{ type: "input_file", file_id: "file-xyz789" },

],

}]

// Mixed

input: [{

role: "user",

content: [

{ type: "input_text", text: "Analyze this data" },

{ type: "input_image", image_url: "file:file-abc123" },

{ type: "input_file", file_id: "file-xyz789" },

],

}]

- PDFs, images and other files can be sent via file IDs you uploaded to

/v1/files. (OpenAI Platform) - Vision & image docs cover

input_imageusage; Responses supports both analysis and generation paths. (OpenAI Platform)

6) Threads → Conversations (automatic migration)

We migrated Assistant “threads” to Conversations:

▶ Next.js

import OpenAI from "openai";

const client = new OpenAI({ apiKey: process.env.OPENAI_API_KEY! });

export async function migrateThreadIdIfNeeded(oldId?: string) {

if (!oldId || oldId.startsWith("conv_")) return oldId;

const conv = await client.conversations.create({

metadata: {

migratedFrom: oldId,

migrationDate: new Date().toISOString(),

},

// Optionally: seed with prior context as items

// items: [...]

});

// Persist conv.id to your DB (map old->new)

await saveConversationMapping(oldId, conv.id);

return conv.id;

}

- Conversations are first‑class and integrate with Responses; you can also chain calls with

previous_response_idfor lighter‑weight state. (OpenAI Platform)

7) Audio transcription (Whisper / GPT‑4o Transcribe)

▶ Node.js (server)

import fs from "node:fs";

import OpenAI from "openai";

const client = new OpenAI({ apiKey: process.env.OPENAI_API_KEY! });

async function transcribe(filePath: string) {

const file = fs.createReadStream(filePath);

// Option A: Latest transcribe model

const t1 = await client.audio.transcriptions.create({

model: "gpt-4o-mini-transcribe",

file,

response_format: "json",

});

// Option B: Whisper (still supported)

const t2 = await client.audio.transcriptions.create({

model: "whisper-1",

file,

});

return { t1, t2 };

}

- See Speech‑to‑Text & model docs (Whisper, GPT‑4o‑mini‑transcribe). (OpenAI Platform)

- For realtime transcription, use the Realtime API with transcription sessions (WebSocket/WebRTC). (OpenAI Platform)

8) Production‑ready streaming hook (what it listens for)

Our hook processes these events:

response.output_text.delta→ stream tokens to UIresponse.output_item.added(whenitem.type === "function_call") → start buffering argsresponse.function_call_arguments.delta→ append arg chunksresponse.function_call_arguments.done→ run your handler(s); if multiple calls, run in parallel- (optional)

response.completed/ errors for cleanup

These event names come from the Responses streaming interface. You’ll find the function‑call argument delta/done events in the API reference. (OpenAI Platform)

Next.js client hook (brief & functional)

// app/hooks/useResponseStream.ts

"use client";

import { useEffect, useRef, useState } from "react";

type Call = { name: string; args: any };

export function useResponseStream(endpoint = "/api/responses") {

const [text, setText] = useState("");

const [calls, setCalls] = useState<Call[]>([]);

const [status, setStatus] = useState<"idle"|"streaming"|"done"|"error">("idle");

const esRef = useRef<EventSource|null>(null);

const argBuffer = useRef<string>("");

const currentFn = useRef<string>("");

function start(prompt: string) {

stop();

setText(""); setCalls([]); setStatus("streaming");

const url = endpoint + "?q=" + encodeURIComponent(prompt);

const es = new EventSource(url); esRef.current = es;

es.onmessage = (ev) => {

try {

const e = JSON.parse(ev.data);

switch (e.type) {

case "response.output_text.delta":

setText(t => t + (e.delta ?? ""));

break;

case "response.output_item.added":

if (e.item?.type === "function_call") { currentFn.current = e.item?.name ?? ""; argBuffer.current = ""; }

break;

case "response.function_call_arguments.delta":

argBuffer.current += e.delta ?? "";

break;

case "response.function_call_arguments.done":

setCalls(a => [...a, { name: currentFn.current, args: safe(argBuffer.current) }]);

argBuffer.current = ""; currentFn.current = "";

break;

case "response.completed":

setStatus("done"); stop();

break;

}

} catch { /* ignore parse errors */ }

};

es.onerror = () => { setStatus("error"); stop(); };

}

function stop() { esRef.current?.close(); esRef.current = null; }

useEffect(() => () => stop(), []);

return { text, calls, status, start, stop };

}

function safe(s: string) { try { return JSON.parse(s || "{}"); } catch { return {}; } }

Use

callsto trigger your tool router in the UI (or forward to the server). Keep the hook UI-only: no secrets here.

9) Robust tool configuration (built‑ins, connectors, custom)

Built‑ins you’ll likely enable:

web_search(for real‑time info) (OpenAI Platform)image_generation(withgpt-image-1) (OpenAI Platform)file_search(for RAG over your uploaded files) (OpenAI Platform)code_interpreter(sandboxed Python, data viz, light image ops) (OpenAI Platform)

Connectors (MCP):

- Add them conditionally, pass auth tokens, consider approval policy. (OpenAI Platform)

Custom functions:

- Define via JSON Schema; keep inputs tight, validate outputs, and cap payload size (we cap response JSON around a few hundred KB to protect streaming/UI).

- For multi‑tool steps, parallelize and submit results together before resuming. (OpenAI Platform)

10) Error handling & ops check‑list

- Streaming resilience: timeouts, backoff, and “complete tool phase then resume” patterns for flaky networks.

- Approvals & privacy: for MCP/connector calls, set approval behavior deliberately; avoid logging tokens or PII. (OpenAI Platform)

- Conversations: persist

conv_*IDs, and if you chain withprevious_response_id, store the lastresponse.idper user/thread. (OpenAI Platform) - Attachments: store OpenAI file IDs and any derived metadata; always generate

input_*items server‑side. (OpenAI Platform) - Web search: budget for per‑call search tool costs when the model elects to search. (OpenAI Platform)

End‑to‑end example: one call using web search, image gen, custom tools & Gmail connector (MCP)

▶ Next.js

// app/api/chat/route.ts

import OpenAI from "openai";

export const runtime = "edge";

const client = new OpenAI({ apiKey: process.env.OPENAI_API_KEY! });

export async function POST(req: Request) {

const body = await req.json();

const tools: any[] = [

{ type: "web_search" },

{ type: "image_generation", model: "gpt-image-1" },

...customToolDefs(), // your function tools

];

if (body.selectedConnectors?.includes("gmail") && body.gmailAccessToken) {

tools.push({

type: "mcp",

server_label: "google_gmail",

connector_id: "connector_gmail",

authorization: body.gmailAccessToken,

require_approval: "never",

});

}

const stream = await client.responses.stream({

model: "gpt-4o",

conversation: body.conversationId ? { id: body.conversationId } : undefined,

input: [

{

role: "user",

content: [

{ type: "input_text", text: body.prompt },

...(body.imageFileIds || []).map((id: string) => ({ type: "input_image", image_url: `file:${id}` })),

...(body.fileIds || []).map((id: string) => ({ type: "input_file", file_id: id })),

],

},

],

tools,

});

// Optionally: intercept streaming events and handle custom tools (as earlier)

// return streaming to client

return new Response(await stream.toReadableStream());

}

function customToolDefs() {

return [

{

type: "function",

name: "fetch_price_quote",

description: "Fetches a price quote by SKU and currency",

parameters: {

type: "object",

properties: { sku: { type: "string" }, currency: { type: "string" } },

required: ["sku", "currency"],

},

},

{

type: "function",

name: "create_support_ticket",

description: "Create a ticket with subject and body",

parameters: {

type: "object",

properties: { subject: { type: "string" }, body: { type: "string" } },

required: ["subject", "body"],

},

},

];

}

Web search & image generation are built‑ins; Gmail is added via the MCP connector surface; custom functions use JSON Schema. (OpenAI Platform)

▶ Node.js (Express)

Same API surface as the Next.js example; the difference is streaming the ReadableStream into the HTTP response.

GPT-5 + Markdown (important cookbook note)

📘 Note — GPT-5 and Markdown formatting The OpenAI Cookbook states: “By default, GPT-5 in the API does not format its final answers in Markdown …” — so if you want Markdown, ask for it explicitly in your system/instructions (e.g., “Respond in GitHub-flavored Markdown with headings and code blocks”). (GitHub)

Tip: Set a global system prompt like:

“Assistant must answer in Markdown, using # headings, fenced code blocks with language tags, and short paragraphs.”

Migration checklist (copy/paste)

- Replace Assistants route(s) with a Responses route (streaming by default). (OpenAI Platform)

- Add a custom streaming hook for

response.output_text.*andresponse.function_call_arguments.*event handling. (OpenAI Platform) - Switch to Conversations (migrate old IDs →

conv_*) or useprevious_response_idwhen long‑lived state isn’t required. (OpenAI Platform) - Transform attachments to Responses input items (

input_text,input_image,input_file). (OpenAI Platform) - Register built‑ins (web search, image generation, etc.) via

tools. (OpenAI Platform) - Wire connectors (MCP) and approval policy; pass OAuth tokens securely. (OpenAI Platform)

- Implement custom functions with strict JSON Schemas; support parallel tool calls; resume with tool results. (OpenAI Platform)

- Add audio transcription (file or realtime) if needed. (OpenAI Platform)

- Harden error handling: retries, timeouts, streaming cleanup, and safe fallbacks.

Tips & gotchas

- Prefer Conversations when you want durable state and analytics; use

previous_response_idto chain ad‑hoc turns. Noteprevious_response_idonly carries the last turn’s context unless you keep chaining. (OpenAI Platform) - Treat tool outputs as data, not UI—cap size, sanitize, and log carefully.

- For connectors, opt‑in per user and avoid logging tokens; match your approval mode to the data risk. (OpenAI Platform)

- For file inputs, upload to Files API, persist file IDs, and generate

input_file/input_imageon the server. (OpenAI Platform)

Appendix: Minimal input builders (client utilities)

(No server call, so one tab)

▶ Next.js / TypeScript

export function toInputItems(args: {

text?: string;

imageFileIds?: string[];

fileIds?: string[];

}) {

const content: any[] = [];

if (args.text) content.push({ type: "input_text", text: args.text });

(args.imageFileIds ?? []).forEach((id) => content.push({ type: "input_image", image_url: `file:${id}` }));

(args.fileIds ?? []).forEach((id) => content.push({ type: "input_file", file_id: id }));

return [{ role: "user", content }];

}

FAQs

1) Do I need Conversations or is previous_response_id enough?

Use Conversations for durable threads/analytics. Use previous_response_id for lightweight chaining; it references prior context without storing a long-lived conversation server-side. (Microsoft Learn)

2) Where do I get event names like response.output_text.delta and response.function_call_arguments.done?

They’re part of the Responses streaming interface and appear in SDK docs and references. Subscribe to them when using responses.stream(…). (OpenAI Platform)

3) How do hosted tools differ from my function tools?

Hosted tools (web search, file search, image gen, code interpreter) run on OpenAI infra and are enabled via tools. Function tools are your JSON-schema functions executed in your environment. (OpenAI GitHub)

4) How do I force Markdown output from GPT-5? Add an instruction: “Always respond in Markdown (GFM) with headings and code fences.” By default, GPT-5 does not format answers as Markdown in the API. (GitHub)

5) Is Whisper deprecated if gpt-4o-mini-transcribe exists?

No — Whisper is still available; many teams prefer gpt-4o-mini-transcribe for accuracy/latency trade-offs. Evaluate both. (OpenAI Platform)

References

- Streaming responses & event types. (OpenAI Platform)

- Function calling in Responses (incl. parallel). (OpenAI Platform)

- Conversations API & conversation state. (OpenAI Platform)

- Migrate to Responses (items, tools, chaining). (OpenAI Platform)

- Web search & tools overview. (OpenAI Platform)

- Images & vision; image generation tool. (OpenAI Platform)

- Files/PDF inputs. (OpenAI Platform)

- Connectors/MCP & approvals. (OpenAI Platform)

- Speech‑to‑text (Whisper & gpt‑4o‑mini‑transcribe). (OpenAI Platform)

Final word

Migrating to the Responses API simplifies your mental model and unlocks stronger agentic patterns: streamed function calls, persistent conversations, built‑ins, and connectors—all in one request. Use the patterns above (especially the streaming hook + parallel tool calls) and you’ll have a robust, production‑ready setup.

Subscribe to my newsletter

Read articles from Tech With Asim | Learn, Build, Lead directly inside your inbox. Subscribe to the newsletter, and don't miss out.

More Articles

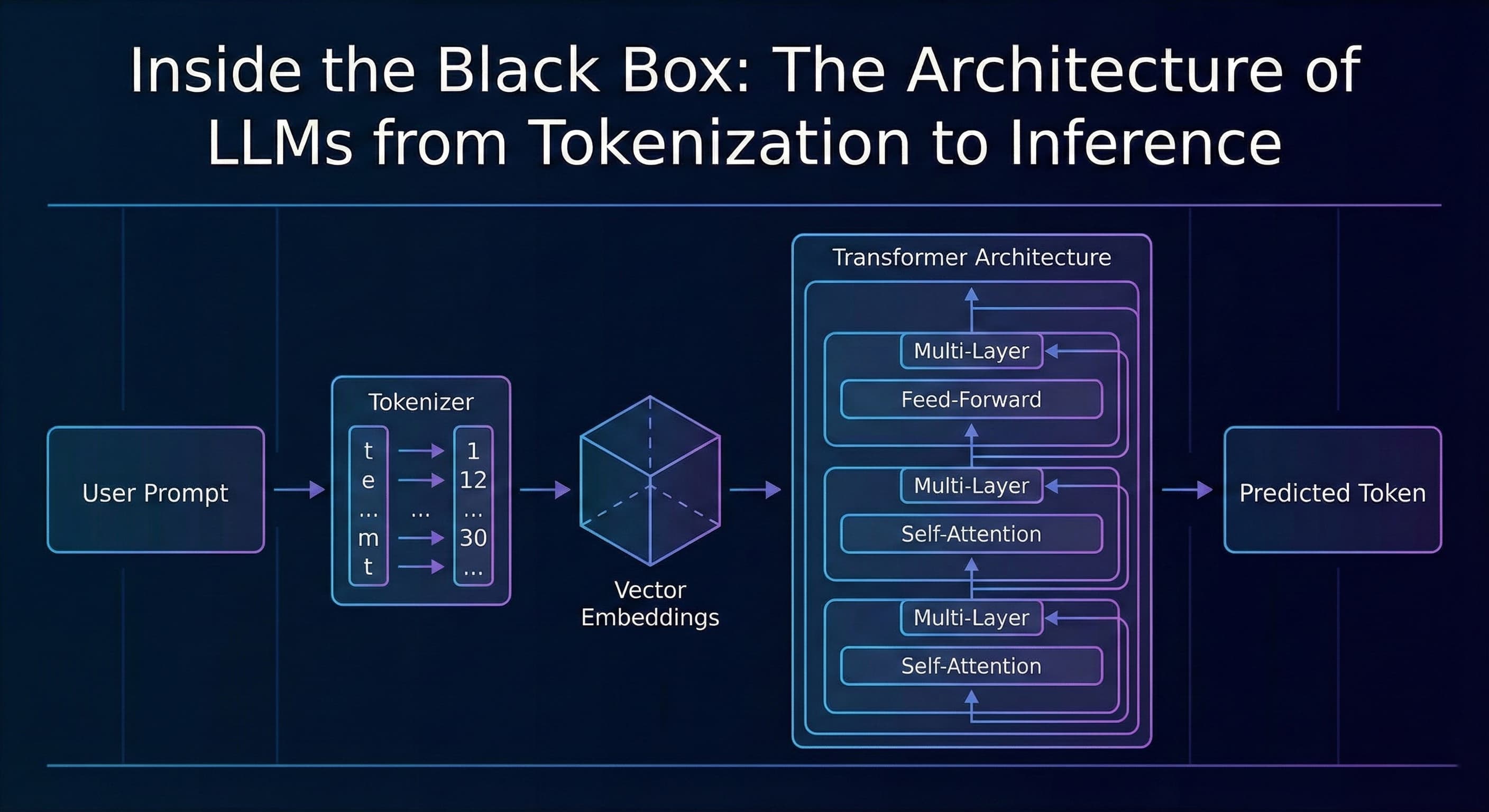

Inside the Black Box: The Architecture of LLMs from Tokenization to Inference

TL;DR Most developers treat AI as a "black box", we send a JSON payload to an API and get a string response. This article breaks down what happens inside that box: from tokenization and vector embeddings to the Transformer architecture and the probab...

Vibe Coding: The Future Every Software Engineer Should Embrace

Introduction We have all seen how fast AI is evolving. From code generation to scaffolding entire modules, tools like ChatGPT, GitHub Copilot, and others are already part of many engineers daily routines. But one approach that stands out and is rapid...

Run DeepSeek R1 Locally with LM Studio – Complete Guide

Introduction AI is evolving rapidly, and while cloud-based AI services are great, they come with privacy concerns, API limits, and server downtime. What if you could run DeepSeek R1 on your own machine without depending on external servers? In this g...