Run DeepSeek R1 Locally with LM Studio – Complete Guide

Download LM Studio and run the DeepSeek R1 model on your local machine

Introduction

AI is evolving rapidly, and while cloud-based AI services are great, they come with privacy concerns, API limits, and server downtime. What if you could run DeepSeek R1 on your own machine without depending on external servers?

In this guide, I will walk you through installing LM Studio and running the DeepSeek R1 1.5B model locally on Windows, macOS, and Linux. If you have ever wanted to experiment with AI models on your own machine, this guide is for you.

What is LM Studio?

LM Studio is a free desktop application that allows you to run large language models (LLMs) locally on your computer. It provides an easy interface to download, manage, and run AI models without needing advanced technical knowledge.

Why Use LM Studio?

- No Internet Required – Once a model is downloaded, it runs offline on your machine
- No API Limits – No rate limits or per-request quotas
- Full Privacy – Your queries and data stay on your device
- Supports Multiple Models – Run DeepSeek AI, Mistral, LLaMA, Falcon, and more

Installing LM Studio on Windows, macOS, and Linux

Installing LM Studio on Windows

- Download LM Studio from the official website: LM Studio Download
- Run the installer (.exe file) and follow the on-screen instructions
- Once installed, open LM Studio

📌 Tip: If you are using a GPU, make sure your drivers are up to date for better performance.

Installing LM Studio on macOS

- Open Terminal and install Homebrew (if not already installed):

/bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/HEAD/install.sh)"

- Install LM Studio using Homebrew (it is distributed as a cask):

brew install --cask lm-studio

- Launch LM Studio from the Applications folder or from Terminal:

open -a "LM Studio"

📌 Tip: Mac users with Apple Silicon (M1/M2/M3) should use Metal acceleration for better AI model performance.

Installing LM Studio on Linux

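- Open Terminal and update your packages (the command below is for Debian/Ubuntu-based distros):

sudo apt update && sudo apt upgrade -y

- Download the LM Studio AppImage from the official site, or install it with Flatpak if the package is available on Flathub for your system:

flatpak install flathub ai.lmstudio.LMStudio

- Run LM Studio. For the AppImage, mark the downloaded file as executable and launch it; for Flatpak:

flatpak run ai.lmstudio.LMStudio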

📌 Tip: Some Linux distros may require additional CUDA dependencies for NVIDIA GPUs.

Downloading and Running DeepSeek R1 Model

Once LM Studio is installed, you need to download the DeepSeek R1 model and run it locally.

Download DeepSeek R1 Model

- Open LM Studio
- Go to the Model Catalog
- Search for DeepSeek R1 1.5B
- Click Download

📌 Tip: The DeepSeek R1 1.5B model is a distilled variant (typically listed as DeepSeek R1 Distill Qwen 1.5B). It is lightweight compared to larger variants such as 7B or 70B, making it ideal for local experiments.

Running DeepSeek R1 Locally

Once the model is downloaded, follow these steps:

- Open LM Studio
- Navigate to the Local Models tab
- Select DeepSeek R1 1.5B
- Click Run Model

📌 Tip: If you have a powerful GPU, enable CUDA (for NVIDIA) or Metal (for Mac) for better performance.
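Once the model is loaded, a script can confirm that LM Studio is actually serving it. The sketch below goes slightly beyond the steps above and rests on several assumptions: that you have also started LM Studio's local server (from the Developer tab), that it listens on the default `http://localhost:1234`, and that it answers `GET /v1/models` in the OpenAI-compatible list format. The function names are hypothetical.

```python
import json
import urllib.request

# Assumption: LM Studio's local server is running on its default port.
BASE_URL = "http://localhost:1234"


def parse_model_ids(payload: dict) -> list[str]:
    """Pull the model identifiers out of an OpenAI-style /v1/models response."""
    return [entry["id"] for entry in payload.get("data", [])]


def fetch_model_ids(base_url: str = BASE_URL, timeout: float = 5.0) -> list[str]:
    """Ask the local server which models it exposes (needs the server running)."""
    with urllib.request.urlopen(f"{base_url}/v1/models", timeout=timeout) as resp:
        return parse_model_ids(json.load(resp))


# Offline demonstration with a hand-written sample payload (not real server output).
sample = {"object": "list", "data": [{"id": "deepseek-r1-distill-qwen-1.5b", "object": "model"}]}
print(parse_model_ids(sample))
```

With the server running, `fetch_model_ids()` should return the identifier to pass as `model` in API requests. A connection error usually means the server has not been started yet.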

Using DeepSeek AI for Local Queries

After the model is running, you can interact with DeepSeek AI directly:

Querying DeepSeek AI in LM Studio

Simply type your question in the chatbox and hit enter:

"What are the latest trends in AI development?"

DeepSeek AI will process your request locally without requiring an internet connection.

Using DeepSeek AI in Developer Mode (API Access)

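If you want to integrate DeepSeek AI into your own applications, start LM Studio’s local server (Developer tab) and call its OpenAI-compatible HTTP API:

Python Code to Use DeepSeek AI Locally

import requests

# LM Studio's local server speaks the OpenAI-compatible API; 1234 is its default port.
url = "http://localhost:1234/v1/chat/completions"

# "model" must match the identifier LM Studio shows for your downloaded model.
data = {"model": "deepseek-r1-distill-qwen-1.5b", "messages": [{"role": "user", "content": "Generate Python code for a web scraper"}]}

response = requests.post(url, json=data, timeout=120)
response.raise_for_status()

print(response.json()["choices"][0]["message"]["content"])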

📌 Tip: You can deploy this locally for company-wide AI automation while keeping all data private.
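A response from an OpenAI-compatible chat endpoint nests the generated text inside `choices`, and DeepSeek R1 models usually write their reasoning in a `<think>...</think>` block before the final answer. Here is a minimal sketch for separating the two. The helper names and the sample response are hypothetical, and it assumes the reasoning arrives inline in the message content rather than in a separate field.

```python
import re

# Matches the first <think>...</think> block, including newlines inside it.
THINK_BLOCK = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def extract_reply(response_json: dict) -> str:
    """Return the assistant text from an OpenAI-style chat completion response."""
    return response_json["choices"][0]["message"]["content"]


def split_reasoning(text: str) -> tuple[str, str]:
    """Split R1 output into (reasoning, answer); reasoning is '' if no block is found."""
    match = THINK_BLOCK.search(text)
    if match is None:
        return "", text.strip()
    reasoning = match.group(1).strip()
    answer = (text[:match.start()] + text[match.end():]).strip()
    return reasoning, answer


# Hand-written sample shaped like a chat completion response (not real model output).
sample_response = {
    "choices": [
        {"message": {"role": "assistant",
                     "content": "<think>The user wants a greeting.</think>\n\nHello!"}}
    ]
}

reasoning, answer = split_reasoning(extract_reply(sample_response))
print("Reasoning:", reasoning)
print("Answer:", answer)
```

Keeping the reasoning separate lets an application show only the final answer to users while still logging the model's intermediate thinking for debugging.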

Why Do We Need Local AI Models?

Running AI models locally has several advantages over cloud-based services like ChatGPT, DeepSeek, or OpenAI APIs:

- Data Privacy – No information leaves your device
- No Server Downtime – Unlike DeepSeek’s busy servers, local models are always available
- Zero API Costs – AI inference is free on your own hardware
- Custom Fine-Tuning – Modify AI models for specific tasks

However, there’s a trade-off: Larger AI models require massive hardware, making full-scale LLM deployment difficult on consumer devices.

📌 Example: The full DeepSeek R1 model has 671B parameters and needs hundreds of gigabytes of memory for its weights alone, which is impractical for home use.
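The hardware trade-off can be put into rough numbers. As a back-of-the-envelope sketch, the memory needed just to hold a model's weights is about the parameter count times the bits stored per weight, divided by eight. The function name and the listed sizes are illustrative, and the estimate ignores the context cache and runtime overhead, so real usage will be higher.

```python
def estimate_weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate gigabytes (10^9 bytes) needed to store the weights alone."""
    return params_billions * bits_per_weight / 8


# Illustrative model sizes (billions of parameters) and common precisions.
for size in (1.5, 7.0, 70.0):
    for bits in (16, 8, 4):
        gb = estimate_weight_memory_gb(size, bits)
        print(f"{size:g}B parameters at {bits}-bit: ~{gb:.2f} GB")
```

By this estimate, a 1.5B model needs roughly 3 GB at 16-bit precision and under 1 GB at 4-bit, which is why it fits on ordinary laptops while the largest models do not.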

Final Thoughts & Next Steps

Running the DeepSeek R1 1.5B model with LM Studio is a great way to experiment with AI locally without relying on external servers. While it may not match the performance of cloud-based LLMs, it provides greater control, privacy, and availability.

If you want to run larger AI models, consider upgrading your GPU, using cloud GPUs, or setting up AI clusters.

For more details, watch this video.

📌 What’s Next?

I’m working on setting up DeepSeek AI locally with a frontend AI agent, but I ran into some challenges due to hardware limitations (AMD ROCm compatibility issues).

Stay tuned for updates!

📌 Subscribe to my YouTube channel for more tutorials & insights: Tech With Asim YouTube

Would you like to see more tutorials on AI? Let me know in the comments!


Subscribe to my newsletter

Read articles from Tech With Asim | Learn, Build, Lead directly inside your inbox. Subscribe to the newsletter, and don't miss out.

More Articles

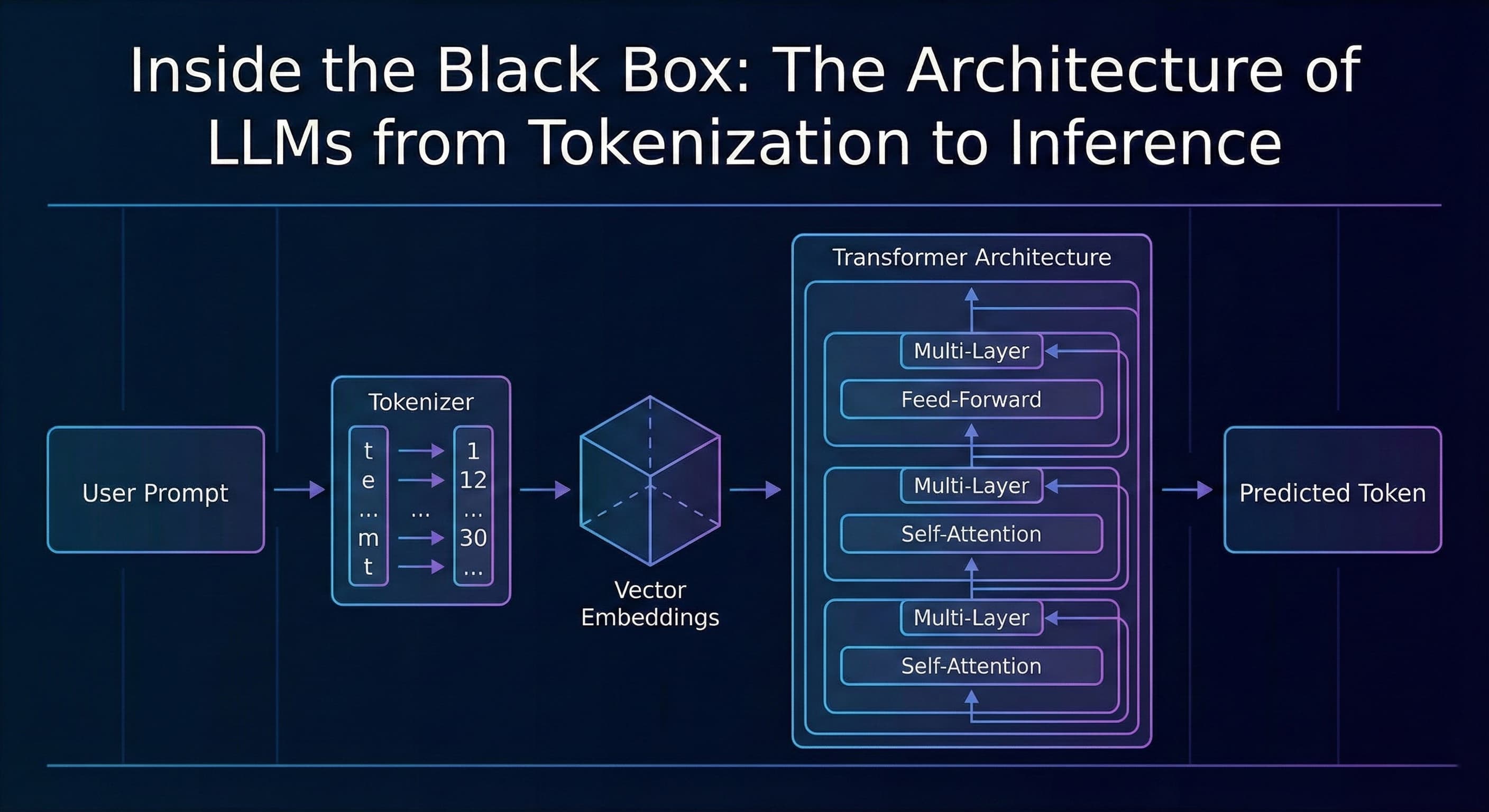

Inside the Black Box: The Architecture of LLMs from Tokenization to Inference

TL;DR Most developers treat AI as a "black box", we send a JSON payload to an API and get a string response. This article breaks down what happens inside that box: from tokenization and vector embeddings to the Transformer architecture and the probab...

Assistant API → Responses API: A Complete, Practical Migration Guide (with Next.js & Node examples)

TL;DR: The Responses API gives you simpler request/response semantics, richer streaming events (including function calls), first‑class tool use, and persistent conversations. This guide shows exactly how to migrate a production app—how to handle stre...

Vibe Coding: The Future Every Software Engineer Should Embrace

Introduction We have all seen how fast AI is evolving. From code generation to scaffolding entire modules, tools like ChatGPT, GitHub Copilot, and others are already part of many engineers daily routines. But one approach that stands out and is rapid...